Eduard Telik

SOUND ENGINEER ▫ MUSICAL DIRECTOR ▫ COMPOSER ▫ SOUND DESIGNER

GAME AUDIO

PROJECTS WORKED ON:

- PLATFORMS: PC, XBOX One

- ENGINES: UNITY, UNREAL, SOURCE

- MIDDLEWARE: FMOD

- PM: GIT, SVN, KANBAN, SCRUM

- SCRIPTING: C#

- ROLES: Lead Audio Designer, Sound Designer, Composer, QA

DISREPAIR

(2023)

Source Engine – Total conversion mod – Sci-fi horror

Roles: Lead Audio, QA

- Creation of sound effects

- Integration of sound effects and soundscapes

- Dialogue post-processing

- Music composition

- Trailer creation (audio and visuals)

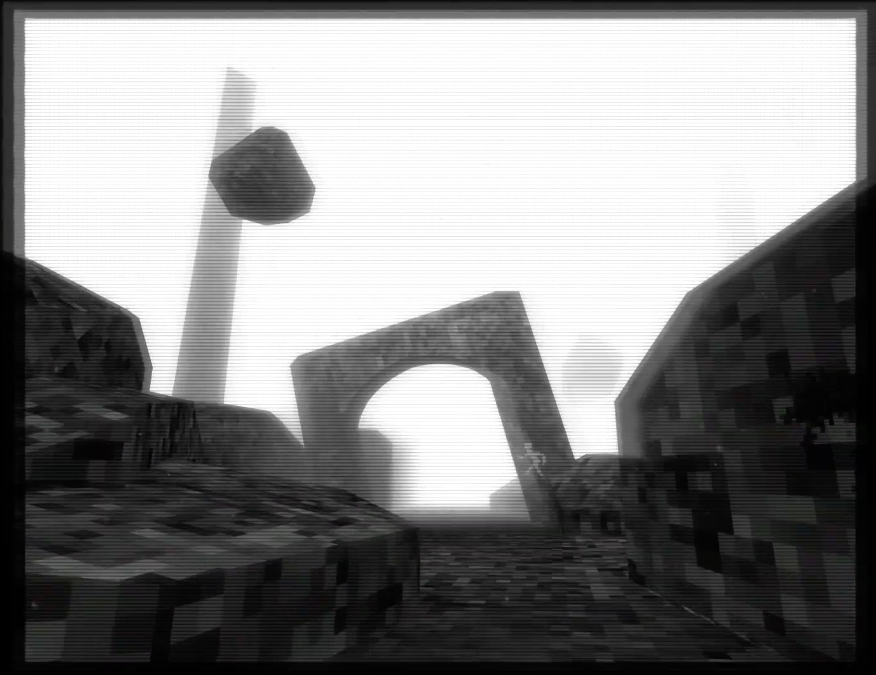

DISREPAIR is a short sci-fi horror exploration game set in the Half-Life universe. As a modification of Half-Life 2, it runs on the Source engine and has a total playtime of about 30 minutes. The development cycle was six months.

Originally, I was approached by the lead developer to compose original music for the project. After seeing the first gameplay builds, I noticed that while models and textures had been completely redesigned to create a very specific art style, unlike what one would see in a typical Source mod, the sound effects were mostly taken from the Source engine. This contrast prompted the idea of recreating every sound effect needed for the game from scratch, bringing it even closer to a total conversion mod.

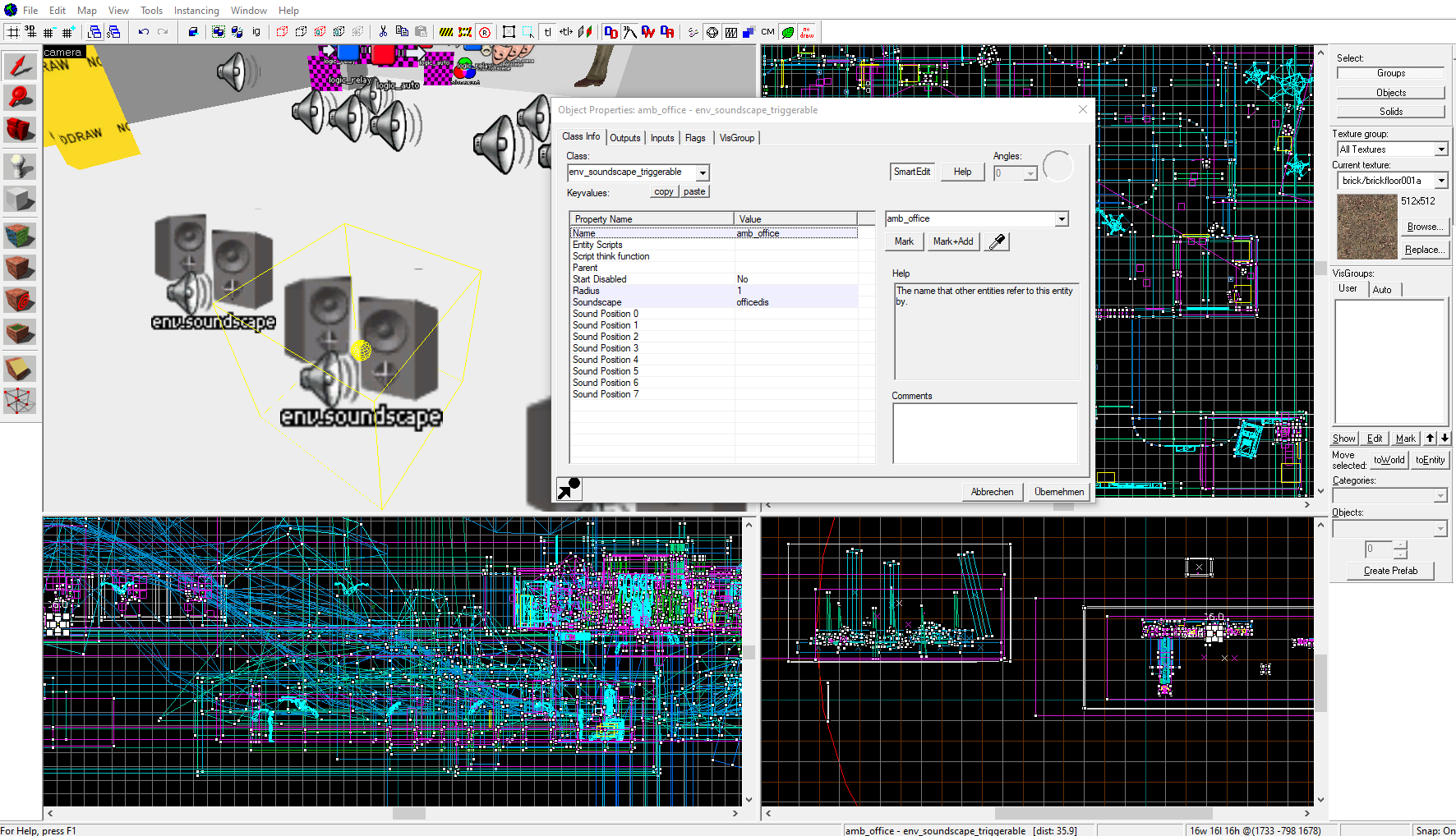

WORKING WITH THE SOURCE ENGINE

On the technical side, the capabilities and limitations of Source’s audio system had to be understood so that asset creation and implementation would work flawlessly. A batch of sound effects typical for FPS games, like footsteps for different surfaces, physics objects or UI sounds, had already been implemented. Those could simply be replaced by a new file with the same name, path and format. During that process, some undocumented engine behavior was discovered, such as playback issues with BWF (Broadcast Wave Format), which most professional audio software uses by default when exporting a .wav file. Other sound effects required additional implementation in the level editor or the use of Source’s soundscape system.
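One way to sidestep the BWF issue is to remove the broadcast extension (`bext`) chunk from an exported file before dropping it into the mod. The following is a minimal sketch of that idea, not the tooling used on the project; the function name `strip_chunks` and the choice of which chunks to drop are assumptions made for illustration.

```python
import struct


def strip_chunks(data: bytes, drop=(b"bext",)) -> bytes:
    """Return a copy of a RIFF/WAVE file without the chunks listed in `drop`.

    All other chunks (format, audio data, cue points, ...) are kept in
    their original order. Hypothetical helper for illustration only.
    """
    if data[:4] != b"RIFF" or data[8:12] != b"WAVE":
        raise ValueError("not a RIFF/WAVE file")

    kept = []
    pos = 12  # first chunk starts after 'RIFF', size field and 'WAVE'
    while pos + 8 <= len(data):
        chunk_id, size = struct.unpack_from("<4sI", data, pos)
        body = data[pos + 8 : pos + 8 + size]
        pad = size & 1  # chunks are word-aligned: odd sizes get one pad byte
        if chunk_id not in drop:
            kept.append(struct.pack("<4sI", chunk_id, size) + body + b"\x00" * pad)
        pos += 8 + size + pad

    payload = b"WAVE" + b"".join(kept)
    # The RIFF size field counts everything after itself.
    return b"RIFF" + struct.pack("<I", len(payload)) + payload
```

Typical use would be reading the exported file with `open(path, "rb")`, passing the bytes through `strip_chunks`, and writing the result under the file name the engine expects.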

AMBIENCE & SOUNDSCAPES: SETTING THE MOOD

Soundscapes offer a simple way of implementing dynamic ambience inside the Source engine. Beyond the technical groundwork, the artistic and emotional side of creating ambience had to be addressed. As the player roams through the abandoned hallways, without a single NPC in the entire game, the soundscapes should evoke the opposite feeling: the feeling of not being alone in that darkness, the slight paranoia that seeps into the player’s mind that there MIGHT be something around the next corner. This was achieved by layering multiple base ambience loops for different areas with specific sound events that would be played at random intervals. Those sound events were carefully designed, some imitating key sounds of the player, while others were complete sub-stories of their own.

Example A: Player using the camera and the soundscape alternative.

Example B: Player slush footsteps and soundscape event.

Example C: Unknown creature roaming around.

SOUNDTRACK: BREAKING THE ENGINE LIMITS

DISREPAIR’s soundtrack consists of only a few tracks of dark industrial ambient. Here again, the limitations of the Source engine led to specific choices in designing the music: audio files could only be looped seamlessly in the .wav format, but exceeding a specific file size would cause the music not to play at all. And a more complex ambient track with enough points of tension and release needs a certain minimum length. The solution was to use an overlap approach instead of a seamless one: the track fades out for a few seconds while another iteration is started. And because dark ambient music doesn’t rely on a strict tempo, slight timing variations in the overlap would not cause any noticeable issues.
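The timing of such an overlap can be sketched as follows. This is only an illustration of the idea described above: the function names, the linear fade shape and the example durations are assumptions, and whether the fade was baked into the file or applied at playback is not specified here.

```python
def overlap_start_times(track_length: float, fade_length: float, repeats: int) -> list:
    """Start time (seconds) of each iteration when every new iteration
    begins at the moment the previous one starts to fade out."""
    if not 0 <= fade_length < track_length:
        raise ValueError("fade must be shorter than the track")
    interval = track_length - fade_length
    return [i * interval for i in range(repeats)]


def fade_gain(t: float, track_length: float, fade_length: float) -> float:
    """Gain of one iteration at local time t, with a linear fade-out
    over the last `fade_length` seconds (assumed fade shape)."""
    if t < 0 or t >= track_length:
        return 0.0
    fade_start = track_length - fade_length
    if t <= fade_start:
        return 1.0
    return (track_length - t) / fade_length
```

With a hypothetical 120-second track and an 8-second fade, `overlap_start_times(120.0, 8.0, 3)` places the iterations at 0, 112 and 224 seconds.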

TESTING, TESTING AND… MORE TESTING

As the Audio Lead for this project, testing the implemented audio features was naturally within my purview. And although my focus was on audio during playtesting sessions, I also gathered feedback on bugs, issues and game/level design choices, and contributed to polishing the player experience as a whole. This interdisciplinary work is usual, if not necessary, in small-scale teams and projects, and leads not only to the improvement of the project itself, but also to the personal advancement of the team members. For example, although I had often contributed to the audio side of a trailer for a game or product, DISREPAIR was the first time I took the whole teaser production into my own hands.

STRANDED STATION

(2024)

Unity Engine – VR Puzzle Game

Roles: Lead Audio

- Music composition

- Sound design

- FMOD implementation

- Unity scripting

- Audio team management

Stranded Station is a short VR puzzle experience developed in the Unity engine. The development team consisted of around 15 university students, who worked on this project for around 3 months. The audio department was made up of three people, including myself in the role of Audio Lead.

TAKING RESPONSIBILITY

As the audio team consisted of people with different backgrounds and skill levels, tasks had to be split up appropriately. The main task for the other two members was handling the entire voice-over department: acquiring voice actors (or voice acting themselves), recording, editing and delivering the unprocessed voice lines for later integration. They also created a few extra audio assets, like sounds and music playing from a radio. The rest of the audio tasks were in my hands: creation of the music and the majority of the sound effects, implementation in FMOD and additional coding in Unity.

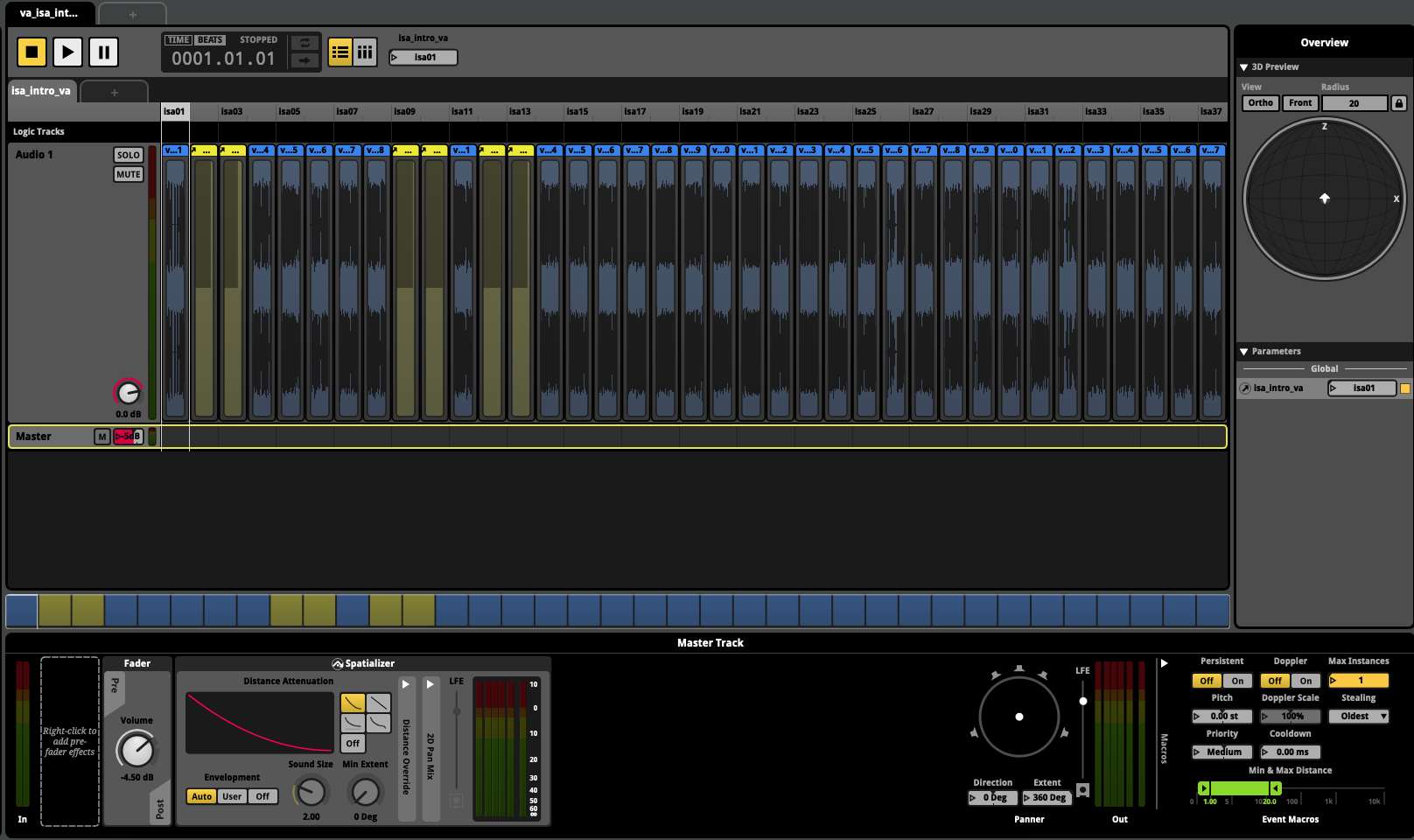

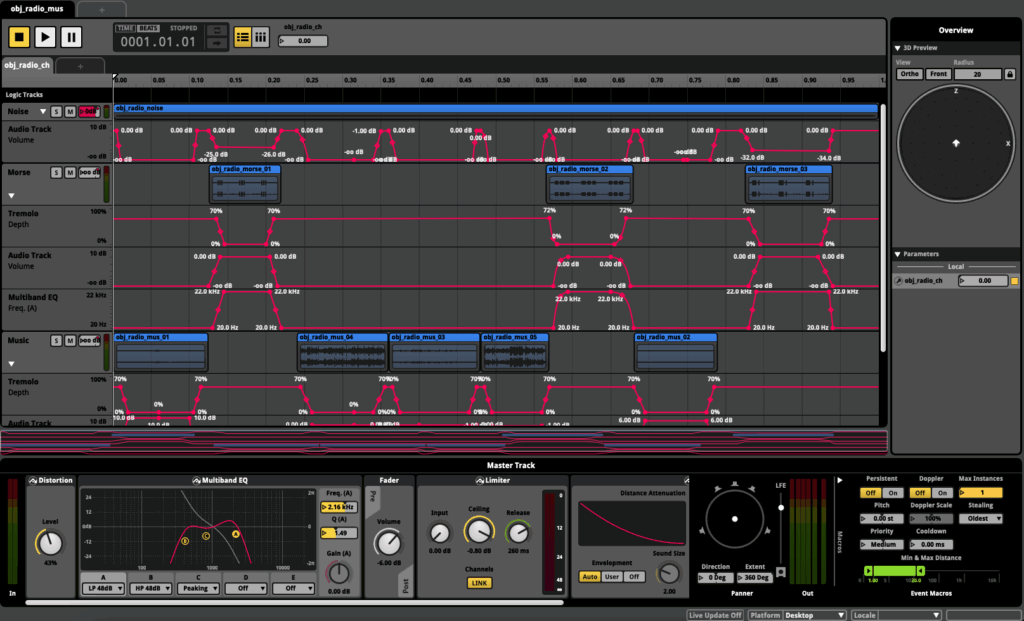

MUSIC AND FMOD

Using an audio middleware like FMOD can bring great advantages to audio development. Not only can sound designers and composers work more intuitively and test events and functionality on their own, it also reduces the workload for coding and implementation: complex audio behavior can be designed in FMOD, while the code only has to hand a single parameter value over to the FMOD event. One example in Stranded Station was the background music, whose intensity could be controlled dynamically instead of the buildup being a linear exported audio file. Another example is a complex in-game radio, where multiple layers of different audio sources needed to be faded in and out and processed with real-time effects. I cannot begin to imagine someone having to program and fine-tune this behavior entirely in code.

SOUND DESIGN CHALLENGES

It is no secret that a VR game lives off interactivity, more specifically interaction with physics objects. Stranded Station’s small level contains over 20 different physics objects (mugs, screwdrivers, books, tapes…), and each type has its own physics interaction sounds, which were recorded specifically for this project. Object collision sounds have multiple variants and dynamic layers that depend on the object’s velocity at impact, so the player hears a difference between carefully putting an object down and throwing it around. Additionally, each object has its own grab sounds with round-robin samples. Some objects also emit a sound when shaken.
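The velocity-dependent layering and round-robin selection can be sketched independently of any engine. The thresholds, layer names and helper names below are assumptions chosen for illustration; in an FMOD-based setup the normalized intensity would more likely be passed to the event as a parameter.

```python
def impact_intensity(speed: float, min_speed: float = 0.2, max_speed: float = 4.0) -> float:
    """Map an impact speed (m/s) to a 0..1 intensity, clamped at both ends.
    The speed range is an assumed example, not a measured value."""
    t = (speed - min_speed) / (max_speed - min_speed)
    return max(0.0, min(1.0, t))


def pick_layer(intensity: float) -> str:
    """Choose a collision layer from the intensity (assumed three layers)."""
    if intensity < 0.33:
        return "soft"
    if intensity < 0.66:
        return "medium"
    return "hard"


class RoundRobin:
    """Cycle through sample variants so the same one never plays twice in a row."""

    def __init__(self, variants):
        self._variants = list(variants)
        self._index = 0

    def next(self):
        variant = self._variants[self._index]
        self._index = (self._index + 1) % len(self._variants)
        return variant
```

A grab sound would then call `next()` on its own `RoundRobin` instance each time the object is picked up, while a collision would first compute `pick_layer(impact_intensity(speed))`.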

UNITY SCRIPTING

Even with a tool as powerful as FMOD at hand, some specific audio features could not be realized without additional coding. The shake mechanic, for example, doesn’t work by detecting velocity, but by detecting sudden directional changes in the velocity. This was rigorously explored and tested against real-life physics: measuring both the linear and angular velocity of a VR controller while holding an actual object (like a spray bottle filled with water), and identifying the motion patterns at which the real object would emit which kind of sound.
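A minimal sketch of detecting a sudden directional change, assuming two consecutive velocity samples are available: the angle between them is checked via their normalized dot product. The function name and both thresholds are illustrative assumptions, not the values found during testing.

```python
import math


def is_shake(prev_velocity, velocity, min_speed: float = 0.5, max_cos: float = -0.5) -> bool:
    """Return True if the velocity direction flipped sharply between two samples.

    prev_velocity / velocity: 3D vectors (x, y, z) in m/s.
    min_speed: both samples must be at least this fast (assumed value).
    max_cos:   cosine of the angle between the samples must not exceed
               this; -0.5 corresponds to a turn of 120 degrees or more.
    """
    prev_speed = math.sqrt(sum(c * c for c in prev_velocity))
    speed = math.sqrt(sum(c * c for c in velocity))
    if prev_speed < min_speed or speed < min_speed:
        return False  # too slow to count as a shake
    dot = sum(a * b for a, b in zip(prev_velocity, velocity))
    return dot / (prev_speed * speed) <= max_cos
```

Moving fast in one direction never triggers the check; only a quick reversal does, which matches the idea of reacting to directional change rather than to speed.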

Another example is the entire voice-over playback and subtitle system. The voice lines weren’t just played randomly, but were mostly reactive to the player’s actions, as the player had to scan an object to get a specific voice line. This resulted in a somewhat complex “dialogue” tree that also needed to allow interruptions by voice lines triggered by other, non-player-related events.

INTERSTELLAR MARINES

(ONGOING)

Unity Engine – Sci-fi shooter – Ongoing development

Roles: Sound Designer

- Sound design

- Music composition

- Unity integration

- Audio feature implementation

While the game has been in development since 2005, my involvement with Interstellar Marines started around 2016. By that point, most of the original development team had left the project, leaving only the project lead and a few volunteer developers to continue work on the game.

SOUND DESIGN TASKS

As a lot of audio content for the 2016 state of the game was already implemented, it fell to me to handle the audio asset production for upcoming features and updates: new weapons, objects and NPCs needed sound design that would still fit the overall sound aesthetic and workflow set by the previous sound designer. Some older sounds were in dire need of a rework due to upcoming changes to models and animations. And some special holiday events required quick, working results outside the regular development cycle of the bigger updates.

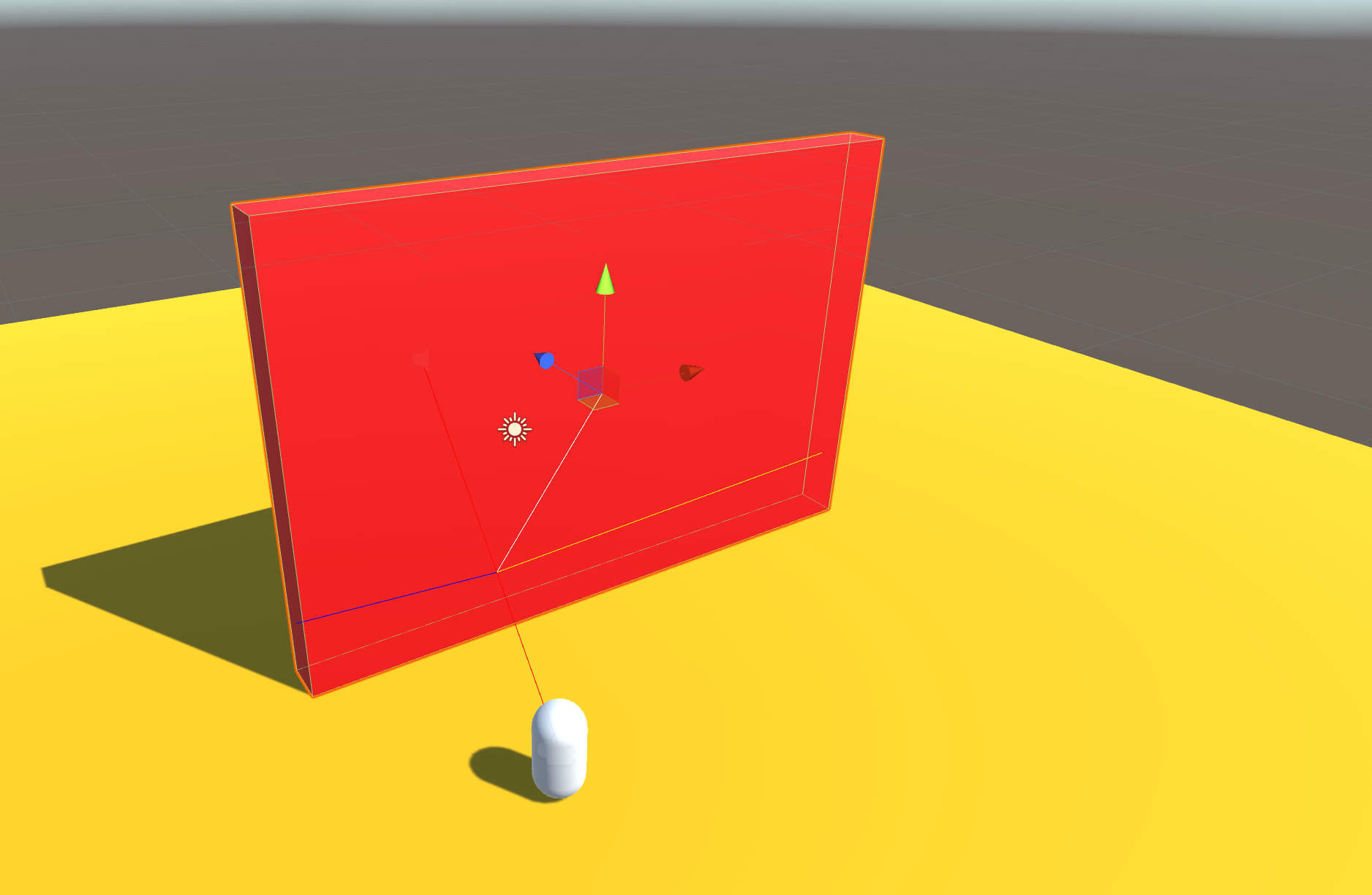

UNITY CODING

Besides asset creation, I also got involved in conceptualizing and prototyping new audio features to increase realism, immersion and the 3D perception of sound. One of those projects was the development of an audio occlusion and diffraction system using only Unity’s audio system. The first concept and iteration was easy to handle: a single raycast that checks whether there is an object between the head of the player and the sound-emitting object, and controls a simple lowpass filter. The frequency of said filter could simply depend on the material of the occluding object. This is where prototyping already started to become a little difficult: Unity only allows one lowpass filter component on an audio object, and some audio objects already had a lowpass filter on them for other purposes and features, which would have been a blatant source of bugs. Rather than completely unravel the existing audio system just to test a prototype, I chose to script a simple digital biquad filter, which made it possible to add an additional lowpass filter to any audio object.
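For reference, a second-order (biquad) lowpass can be sketched as below. The coefficients follow the widely used Audio EQ Cookbook formulas; the class name, default Q and sample rate are assumptions, and this is not the project’s script. In Unity, per-sample processing of this kind is usually run from the `OnAudioFilterRead` callback.

```python
import math


class BiquadLowpass:
    """Second-order lowpass filter (direct form I), one instance per channel."""

    def __init__(self, cutoff_hz: float, sample_rate: float = 48000.0, q: float = 0.7071):
        w0 = 2.0 * math.pi * cutoff_hz / sample_rate
        alpha = math.sin(w0) / (2.0 * q)
        cos_w0 = math.cos(w0)
        a0 = 1.0 + alpha
        # Coefficients normalized by a0.
        self.b0 = (1.0 - cos_w0) / 2.0 / a0
        self.b1 = (1.0 - cos_w0) / a0
        self.b2 = (1.0 - cos_w0) / 2.0 / a0
        self.a1 = -2.0 * cos_w0 / a0
        self.a2 = (1.0 - alpha) / a0
        # Filter state: the two previous inputs and outputs.
        self.x1 = self.x2 = self.y1 = self.y2 = 0.0

    def process(self, x: float) -> float:
        """Filter a single sample and update the internal state."""
        y = (self.b0 * x + self.b1 * self.x1 + self.b2 * self.x2
             - self.a1 * self.y1 - self.a2 * self.y2)
        self.x2, self.x1 = self.x1, x
        self.y2, self.y1 = self.y1, y
        return y
```

Because the filter is just a few multiplications per sample, any number of instances can be stacked on one audio object, which is the property the prototype needed.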

With the next iteration of the occlusion prototype, I wanted to create something more dynamic and more realistic at the same time: instead of having a fixed cutoff frequency, I wanted to simulate sound diffraction around corners. After finding an occluded object, the script would try to find the closest corner of the occluding object and calculate the filter frequency from the positions of the player, the emitting object and the nearest corner. This was done by sending out additional raycasts from the player at increasing azimuth angles (both positive and negative) and checking whether each new path was occluded as well. While it created an impressive and realistic effect in the prototype, this method would be too taxing on the CPU, as too many raycasts would be required for multiple occluded objects, even when checking only horizontally, in 10° steps, and only once per second.

MUSIC

Besides sound effects, the use of music has been an ongoing discussion during development. While the original composer had provided a handful of tracks, they were mostly designed and used for cinematics and cutscenes. The actual gameplay didn’t use any non-diegetic music, as it was deemed too distracting from the premise of a realistic and immersive tactical shooter: the biggest fear for the Lead Developer was having an “orchestra in a backpack” scenario. Therefore, I sketched out some ambient music tracks diverging from the original OST, designed to enhance the atmosphere of certain gameplay situations. This was also the starting point for prototyping a dynamic music system in Unity, to make better use of those music tracks in future updates.

Outside of in-game music, additional tracks were composed for use in promotional material, fitting the style and genre of the previous composer’s work.

CLASH

(2015)

XBOX One – 2D Arena Fighter – Indie game

Roles: Composer & Sound Designer

- Music composition

- Sound design

CLASH is a 2D Arena Fighter developed for the XBOX One. It was featured in the XBOX Indie section at E3 in 2015 and released in the same year.

SOUNDTRACK

The music for CLASH consists of six tracks: one main menu theme, one scoreboard theme and four level tracks. The core instrumentation is a combination of orchestral strings, brass and woodwinds with heavy orchestral drums and percussion, plus a few synthetic sounds and effects thrown into the mix – a typical orchestration for the fantasy action genre. The four level tracks use additional instruments for their main motifs, highlighting the corresponding level and the playable character associated with it. The level for the Panda character, for example, uses a Guzheng as its lead instrument, while the Lion character’s theme uses a heavy brass fanfare.

For the programmers working on this title, each track was split into two files: a short file containing the introduction of the track, and the main file, which contained a seamlessly looping version of the track. That way, the tracks could be played with an intro and still be looped without issue. Additionally, a third render of each track was made for the soundtrack release, in which the entire track, including the intro, is played and simply fades out after one repetition.
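The intro-plus-loop playback logic on the programming side can be sketched as a mapping from elapsed time to a file and an offset within it. The function name, the file labels and the example lengths are assumptions for illustration; how the game actually scheduled the two files is not documented here.

```python
def intro_loop_position(t: float, intro_length: float, loop_length: float):
    """Return which file is audible at playback time t (seconds) and the
    offset inside that file: the intro plays once, then the loop repeats."""
    if t < 0:
        raise ValueError("playback time must not be negative")
    if t < intro_length:
        return ("intro", t)
    return ("loop", (t - intro_length) % loop_length)
```

With a hypothetical 8-second intro and a 60-second loop, 70 seconds into playback the loop file has just wrapped around and is 2 seconds into its second pass.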

SOUND DESIGN

As with the music, the core of the sound design for this game centered on creating unique and recognizable sounds for the playable characters. This was achieved with a blend of self-performed voice acting, additional foley and voice processing. Most other sounds were designed to support gameplay elements and player actions.

Testing the sound effects and the mix for the game proved to be a challenge. At that time, only the main development team had access to an XBOX with an appropriate dev kit, which meant that I had to find alternative ways to balance and fine-tune assets before delivering them. Because I used raw gameplay footage as the basis for my DAW projects anyway, I could tweak and balance multiple sound effects with visual guidance. Later mixing iterations could be evaluated using gameplay recordings with my sounds included, sent to me by the main development team.